Bringing AI to the Physical World: Top 5 ESP32 OpenClaw Projects & Hardware Ecosystem

The AI landscape shifted dramatically in early 2026 with the explosive rise of OpenClaw, the autonomous, open-source AI agent framework. But the real revolution isn't happening in expensive cloud server racks; it's happening on the edge. Hardware developers worldwide are doing what once seemed impossible: squeezing a fully functional, persistent AI agent loop into a $5 microcontroller.

By combining the OpenClaw architecture with the Espressif ESP32 series, developers are creating pocket-sized AI companions that can interact with the physical world 24/7. Today, we are exploring the top 5 GitHub projects driving this trend, the undeniable charm of ESP32 development, and the essential peripheral BOM you need to build your own.

The Top 5 ESP32 OpenClaw Projects on GitHub

Based on recent GitHub traction, here are the top 5 repositories leading the embedded OpenClaw revolution:

1. memovai/mimiclaw (MimiClaw)

- The Pioneer of Pocket AI: MimiClaw is arguably the most famous bare-metal implementation. It drops heavyweight stacks such as Linux and the Node.js runtime, running entirely in highly optimized C on an ESP32-S3.

- Highlight: It features a complete ReAct-style agent loop, connects to Telegram, and uses the ESP32's flash storage (via simple .md files) for persistent memory across reboots.
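To make the idea of a ReAct-style loop with file-backed memory concrete, here is a minimal desktop sketch in Python. It is not MimiClaw's code (that project is written in C). The `fake_llm` function, the `read_temperature` tool, the JSON step format, and the `MEMORY.md` filename are all assumptions made up for illustration.

```python
import json
import os
import tempfile

def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call; returns one JSON step."""
    if "Observation:" in prompt:
        return json.dumps({"final": "It is 21.5 C in the room."})
    return json.dumps({"action": "read_temperature", "input": ""})

# Hypothetical tool table; on real hardware this would read a sensor.
TOOLS = {"read_temperature": lambda _arg: "21.5"}

def load_memory(path: str) -> str:
    """Return the markdown memory file contents, or '' if it does not exist."""
    if not os.path.exists(path):
        return ""
    with open(path, encoding="utf-8") as f:
        return f.read()

def append_memory(path: str, note: str) -> None:
    """Append one markdown bullet so the note survives a restart."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(f"- {note}\n")

def run_agent(question: str, memory_path: str, max_steps: int = 4) -> str:
    """Reason -> act -> observe until the model returns a final answer."""
    prompt = f"Memory:\n{load_memory(memory_path)}\nQuestion: {question}\n"
    for _ in range(max_steps):
        step = json.loads(fake_llm(prompt))
        if "final" in step:
            append_memory(memory_path, f"Q: {question} -> A: {step['final']}")
            return step["final"]
        observation = TOOLS[step["action"]](step["input"])
        prompt += f"Action: {step['action']}\nObservation: {observation}\n"
    return "Gave up after too many steps."

memory_file = os.path.join(tempfile.mkdtemp(), "MEMORY.md")
answer = run_agent("How warm is it?", memory_file)
```

Because the memory is an ordinary text file, the next call to `run_agent` sees the earlier exchange in its prompt, which is the essence of persistence across reboots.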

2. hrwtech/openclaw-esp32

- The Standard Hardware Port: This repository serves as a highly versatile bridge between the core OpenClaw node architecture and the ESP32 ecosystem.

- Highlight: It excels in its robust API gateway integration, making it exceptionally easy for developers to add custom hardware tool-calls (like toggling relays or reading environmental sensors) directly from the LLM prompt.
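A hardware tool-call usually comes down to a name-to-function table that the agent consults when the LLM emits a structured request. The sketch below shows that pattern in plain Python. The `tool` decorator, the JSON call shape, and the `set_relay` and `read_humidity` tools are hypothetical and are not taken from the openclaw-esp32 repository.

```python
import json

TOOLS = {}  # tool name -> {"fn": callable, "description": str}

def tool(name: str, description: str):
    """Decorator that registers a function as a callable tool."""
    def register(fn):
        TOOLS[name] = {"fn": fn, "description": description}
        return fn
    return register

relay_state = {"on": False}  # stands in for a GPIO-driven relay

@tool("set_relay", 'Switch the relay on or off. Args: {"on": bool}')
def set_relay(on: bool) -> str:
    relay_state["on"] = bool(on)
    return "relay on" if relay_state["on"] else "relay off"

@tool("read_humidity", "Return relative humidity in percent. No args.")
def read_humidity() -> str:
    return "48.0"  # fixed value standing in for a real sensor read

def dispatch(tool_call_json: str) -> str:
    """Run the tool named in an LLM tool-call and return text for the model."""
    call = json.loads(tool_call_json)
    entry = TOOLS.get(call.get("name"))
    if entry is None:
        return f"error: unknown tool {call.get('name')!r}"
    try:
        return entry["fn"](**call.get("args", {}))
    except TypeError as exc:
        # Wrong or missing arguments are reported back instead of crashing.
        return f"error: bad arguments ({exc})"

result = dispatch('{"name": "set_relay", "args": {"on": true}}')
```

Returning errors as strings rather than raising lets the model see what went wrong and retry with corrected arguments.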

3. tuya/DuckyClaw

- The Commercial-Grade Fusion: Built on top of the TuyaOpen C SDK, DuckyClaw is an edge-hardware-oriented version of OpenClaw designed for serious IoT deployments.

- Highlight: It provides seamless device-cloud fusion. DuckyClaw abstracts the complexity of integrating diverse peripherals (from displays to speaker-mic audio) into ready-to-use building blocks, making it ideal for enterprise IoT applications.

4. EvolvingAgentsLabs/RoClaw

- The Dual-Brain Robot: Taking OpenClaw into motion, RoClaw uses an ESP32-S3 as a "motor controller cerebellum" alongside an ESP32-CAM.

- Highlight: The AI agent analyzes visual data from the ESP32-CAM and compiles bytecode on the fly to drive stepper motors, allowing a 3D-printed chassis to autonomously navigate the physical world based on semantic commands.

5. pycoClaw (MicroPython Implementation)

- The Developer-Friendly Agent: For those who prefer Python over C, this project brings a fully-featured OpenClaw-compatible agent loop to MicroPython on the ESP32-S3.

- Highlight: It features a non-blocking dual-loop asynchronous architecture and natively supports LVGL touchscreen UIs, proving that MicroPython can handle complex, multi-step LLM reasoning on the edge.
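The dual-loop idea can be illustrated with two cooperative coroutines: a fast one that keeps the screen refreshed and a slow one that waits on the LLM. This sketch uses CPython's `asyncio` so it runs on a desktop. The loop names, the timings, and the `None` shutdown sentinel are assumptions, not details of the project itself.

```python
import asyncio

async def ui_loop(events: list, stop: asyncio.Event) -> None:
    """Fast loop: redraws the (simulated) screen every 10 ms."""
    frame = 0
    while not stop.is_set():
        frame += 1
        events.append(f"ui frame {frame}")
        await asyncio.sleep(0.01)

async def agent_loop(inbox: asyncio.Queue, events: list, stop: asyncio.Event) -> None:
    """Slow loop: each message costs one (simulated) LLM round trip."""
    while True:
        message = await inbox.get()
        if message is None:  # sentinel: shut both loops down
            stop.set()
            return
        await asyncio.sleep(0.05)  # stands in for network latency
        events.append(f"agent replied to {message!r}")

async def main() -> list:
    events = []
    stop = asyncio.Event()
    inbox = asyncio.Queue()
    for item in ("hello", None):
        inbox.put_nowait(item)
    # Both loops share one thread; each yields at every await.
    await asyncio.gather(ui_loop(events, stop), agent_loop(inbox, events, stop))
    return events

events = asyncio.run(main())
```

The recorded events show several UI frames before the agent's reply, meaning the interface stayed responsive while the slow request was in flight.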

The Magic of the ESP32: Why Squeeze AI into a $5 Chip?

You might ask: Why not just run OpenClaw on a Raspberry Pi or a mini PC? The charm of ESP32 chip development lies in three core advantages:

- Ultra-Low Power & Always-On: An ESP32-S3 typically draws less than 0.5 W. It can run off a tiny LiPo battery or a standard USB port 24/7. Your AI agent is always awake, listening, and ready without racking up energy bills.

- Direct Physical Control (GPIO): Unlike cloud VMs or PCs, the ESP32 has direct access to the real world. When the LLM decides to "turn on the lights" or "check the temperature," the ESP32 can immediately trigger a relay or read an I2C sensor. It bridges the digital-physical divide with no extra network hop between the agent's decision and the actuator.

- Incredible Cost-Efficiency: Building a fleet of autonomous edge agents on single-board computers (SBCs) is expensive. At under $5 for an ESP32-S3 module (with 16 MB flash and 8 MB PSRAM), developers can deploy dozens of AI nodes across a smart home or factory floor for the cost of a single Raspberry Pi.
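The power claim is easy to sanity-check with a little arithmetic. The sketch below takes the article's 0.5 W figure as the load. The 2000 mAh, 3.7 V LiPo cell and the 85% regulator efficiency are illustrative assumptions, not measured values.

```python
def battery_runtime_hours(capacity_mah: float, cell_voltage: float,
                          load_watts: float, efficiency: float = 0.85) -> float:
    """Estimate runtime from battery energy, conversion loss, and load."""
    energy_wh = capacity_mah / 1000 * cell_voltage
    return energy_wh * efficiency / load_watts

def yearly_energy_kwh(load_watts: float) -> float:
    """Energy used by a device that runs 24/7 for one year."""
    return load_watts * 24 * 365 / 1000

runtime = battery_runtime_hours(2000, 3.7, 0.5)  # about 12.6 hours
energy = yearly_energy_kwh(0.5)                  # 4.38 kWh per year
```

Under these assumptions a small cell lasts roughly half a day at a constant 0.5 W, so true 24/7 operation implies USB power or aggressive sleep modes, while a year on mains power costs only a few kilowatt-hours.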

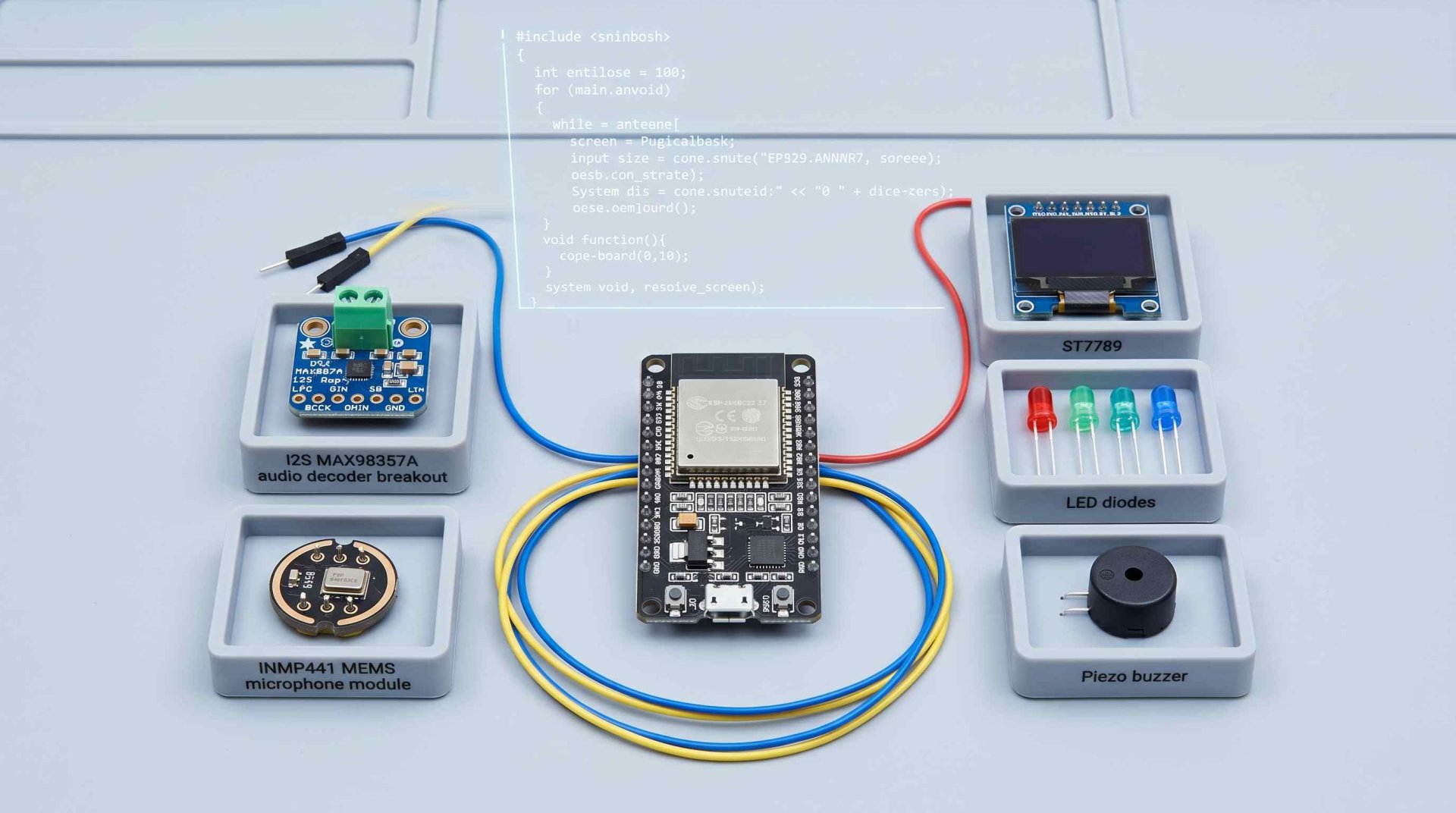

Giving Your AI Senses: The Essential Peripheral BOM

An ESP32 running OpenClaw is just a silent brain without peripherals. To build a truly interactive AI companion (like the ones seen in MimiClaw or DuckyClaw), hardware engineers need to integrate specific I/O components. Here is the core BOM you need to bring your agent to life:

1. Hearing: I2S MEMS Microphones (e.g., INMP441)

Text-based Telegram chats are great, but speech-to-text makes the agent feel alive. The ESP32 uses its I2S interface to stream digital audio directly from an INMP441 omnidirectional MEMS microphone, so voice commands arrive as clean PCM samples without an external ADC.
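Once audio arrives over I2S, firmware typically converts the raw slots to PCM and decides whether anyone is speaking before sending audio to a speech-to-text service. The following desktop sketch shows that step. It assumes 32-bit little-endian slots with the sample left-aligned in the upper bits, and the RMS threshold of 500 is an arbitrary placeholder; the real layout depends on how the I2S driver is configured.

```python
import math
import struct

def slots_to_pcm16(raw: bytes) -> list:
    """Convert 32-bit little-endian slots to signed 16-bit samples."""
    count = len(raw) // 4
    slots = struct.unpack(f"<{count}i", raw[:count * 4])
    return [s >> 16 for s in slots]  # keep the top 16 bits of each slot

def rms(samples: list) -> float:
    """Root-mean-square level of a block of samples."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def is_speech(samples: list, threshold: float = 500.0) -> bool:
    """Crude energy-based voice activity check (threshold is an assumption)."""
    return rms(samples) > threshold

def make_tone(amplitude: int, n: int = 160) -> bytes:
    """Synthetic 440 Hz tone at 16 kHz, packed like the assumed I2S slots."""
    values = [int(amplitude * math.sin(2 * math.pi * 440 * i / 16000)) << 16
              for i in range(n)]
    return struct.pack(f"<{n}i", *values)

loud = slots_to_pcm16(make_tone(8000))
quiet = slots_to_pcm16(make_tone(50))
```

A simple energy gate like this avoids streaming silence to the cloud; production firmware would more likely use a wake-word engine or a proper voice activity detector.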

2. Speaking: I2S Audio Amplifiers & Speakers (e.g., MAX98357A)

To let OpenClaw "talk back" using Text-to-Speech (TTS) APIs, you need an I2S Class-D amplifier module like the MAX98357A. It takes the digital audio stream from the ESP32 and directly drives a 3W miniature speaker, delivering crisp, clear synthesized voice responses.

3. Expressing: SPI/I2C Displays (TFT LCDs & OLEDs)

An AI needs a face. Projects utilizing LVGL (like pycoClaw) rely on displays such as the ST7789 (IPS LCD) or standard SSD1306 (OLED) to show agent status, display animated facial expressions, or print out multi-step thought processes in real time.

4. Alerting & Feedback: Buzzers and LEDs

Even the most advanced AI needs simple alerts and status indicators.

- Active/Passive Buzzers: A passive buzzer driven by the ESP32's PWM (Pulse Width Modulation) output can play varying frequencies to alert you when a task is completed, an error occurs, or the LLM is "thinking." An active buzzer only needs a GPIO toggle but plays a single fixed tone.

- RGB LEDs (WS2812B): A single WS2812B LED or a small NeoPixel ring can act as a visual status indicator (e.g., pulsing blue for processing, solid green for idle, red for network errors), mimicking the aesthetic of high-end smart speakers.
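The status scheme described above maps naturally onto a small lookup table plus a brightness envelope for the pulsing state. This sketch computes the values only; writing them to a WS2812B or a PWM channel is left to the hardware driver. The state names, tone frequencies, and 2-second pulse period are assumptions chosen for illustration.

```python
import math

# Agent state -> LED color, pulse flag, and optional buzzer tone (assumed values).
STATUS = {
    "idle":       {"color": (0, 255, 0), "pulse": False, "tone_hz": None},
    "processing": {"color": (0, 0, 255), "pulse": True,  "tone_hz": None},
    "done":       {"color": (0, 255, 0), "pulse": False, "tone_hz": 1760},
    "net_error":  {"color": (255, 0, 0), "pulse": False, "tone_hz": 220},
}

def led_color(state: str, t_ms: int, period_ms: int = 2000) -> tuple:
    """Return the RGB value to show for a state at time t_ms."""
    entry = STATUS[state]
    r, g, b = entry["color"]
    if not entry["pulse"]:
        return (r, g, b)
    # Raised-cosine envelope: 0 at the start of each period, 1 at the midpoint.
    phase = (t_ms % period_ms) / period_ms
    level = 0.5 - 0.5 * math.cos(2 * math.pi * phase)
    return (round(r * level), round(g * level), round(b * level))

def pwm_period_us(tone_hz: int) -> int:
    """PWM period in microseconds for a passive buzzer tone."""
    return round(1_000_000 / tone_hz)

mid_pulse = led_color("processing", 1000)   # full blue at the pulse midpoint
error_period = pwm_period_us(STATUS["net_error"]["tone_hz"])
```

Keeping the mapping in one table means a new agent state needs only one new row rather than changes scattered across LED and buzzer code.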

Source Your AI Hardware Ecosystem with Ebee

The shift toward Edge AI and MCU-based agents like OpenClaw is creating a massive demand for specific hardware components. Prototyping is fun, but scaling a project from a breadboard to a manufactured PCB requires a reliable supply chain.

At Ebee, we provide global electronic component distribution for the AI hardware revolution. Whether you need bulk reels of ESP32-S3-WROOM-1 modules, highly sensitive MEMS microphones, display drivers, or standard passives like buzzers and LEDs, Ebee guarantees stable lead times and transparent spot-market pricing.

Ready to build your own pocket AI agent? Don't let missing peripherals slow down your development. Upload your complete project BOM to Ebee today, and let our experts handle your procurement from prototype to mass production.